
Meta-learning problems whose underlying functions are ambiguous arise in safety-critical domains such as autonomous vehicles and medical imaging, in exploration strategies for meta-reinforcement learning, and in active learning. In particular, active learning combined with meta-learning is a research direction with potentially many industry use cases.

Active learning is valuable when the initial amount of labeled data is small, which frequently happens in the messy real world. This learning paradigm leverages an existing classifier to decide which unlabeled data points should be labeled in order to improve that classifier the most. Previous active-learning methods rely heavily on heuristics, such as choosing the data points whose labels the model is most uncertain about, choosing the data points whose addition will leave the model least uncertain about other data points, or choosing the data points that differ from the others according to a similarity function.

Woodward and Finn combine meta-learning with reinforcement learning to learn an active learner. More specifically, the authors consider the online setting of active learning, where the agent is presented with examples in a sequence and, for each one, must choose whether to label the example itself or request the correct label. Under the reinforcement learning paradigm, the model is a policy with two actions (labeling and requesting labels), and can thus make effective decisions with few labeled examples. Konyushkova et al. introduce a data-driven approach to meta-learning termed Learning Active Learning.
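As a rough illustration of the first heuristic mentioned above (querying the points the model is most uncertain about), here is a minimal sketch that ranks unlabeled points by the entropy of their predicted class probabilities. The function names and the toy probabilities are assumptions made for this example; they do not come from Woodward and Finn or from Konyushkova et al.

```python
import math


def predictive_entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def most_uncertain(pool_probs, k=1):
    """Return the indices of the k pool points with the highest entropy."""
    ranked = sorted(
        range(len(pool_probs)),
        key=lambda i: predictive_entropy(pool_probs[i]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical class probabilities that an existing classifier assigns
# to four unlabeled points (illustrative numbers only).
pool_probs = [
    [0.98, 0.02],
    [0.55, 0.45],
    [0.80, 0.20],
    [0.50, 0.50],
]

# Ask for labels on the two points the classifier is least sure about.
query_indices = most_uncertain(pool_probs, k=2)
print(query_indices)  # [3, 1]
```

A learned active learner replaces this fixed ranking rule with a policy trained across many tasks, which is the motivation for combining active learning with meta-learning.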

Note: The content of this post is primarily based on CS330’s lecture 5 on Bayesian meta-learning.

1 - The Downsides Of Deterministic Meta-Learning

Deterministic meta-learning approaches provide us with p(ϕᵢ|Dᵢᵗʳ, θ), a point estimate of the task-specific parameters ϕᵢ given the training set Dᵢᵗʳ and the meta-parameters θ. In many situations, we need more than just a point estimate. For example, there are various meta-learning problems that are not entirely determined by their data distribution, because their underlying functions are ambiguous given some prior information. This raises the question: how can we design neural-based Bayesian meta-learning algorithms?
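One way to write down the contrast between a point estimate and a full distribution is sketched below. This uses standard notation and is not necessarily the lecture's exact formulation. The symbol $f_\theta$ (a function mapping a training set to task parameters) is introduced here purely for illustration, and $\phi_i$, $\mathcal{D}_i^{\mathrm{tr}}$, and $\theta$ correspond to ϕᵢ, Dᵢᵗʳ, and θ in the text. A deterministic method places all probability mass on a single value, whereas a Bayesian method keeps the distribution, samples from it, and averages predictions over it.

```latex
% Deterministic: all probability mass sits on one point estimate
p(\phi_i \mid \mathcal{D}_i^{\mathrm{tr}}, \theta)
  = \delta\big(\phi_i - f_\theta(\mathcal{D}_i^{\mathrm{tr}})\big)

% Bayesian: sample task parameters and marginalize over them to predict
\phi_i \sim p(\phi_i \mid \mathcal{D}_i^{\mathrm{tr}}, \theta), \qquad
p(y \mid x, \mathcal{D}_i^{\mathrm{tr}}, \theta)
  = \int p(y \mid x, \phi_i)\, p(\phi_i \mid \mathcal{D}_i^{\mathrm{tr}}, \theta)\, d\phi_i
```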

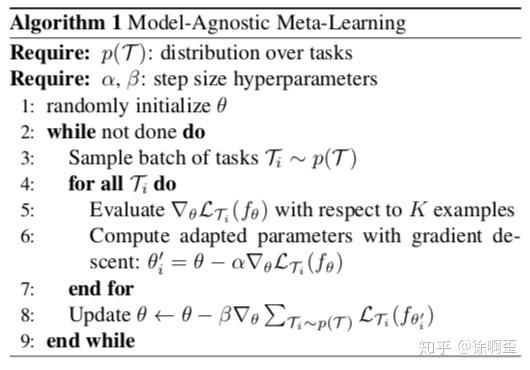

In my previous post, “Meta-Learning Is All You Need,” I discussed the motivation for the meta-learning paradigm, explained the mathematical underpinning, and reviewed the three approaches to designing a meta-learning algorithm (namely, black-box, optimization-based, and non-parametric). I also mentioned in that post that, according to Chelsea Finn, there are two views of the meta-learning problem: a deterministic view and a probabilistic view. The deterministic view is straightforward: we take as input a training data set Dᵗʳ, a test data point, and the meta-parameters θ to produce the label corresponding to that test input. The probabilistic view incorporates Bayesian inference: we perform a maximum likelihood inference over the task-specific parameters ϕᵢ, assuming that we have the training dataset Dᵢᵗʳ and a set of meta-parameters θ. This blog post is my attempt to demystify the probabilistic view and answer key questions such as: why is the deterministic view of meta-learning not sufficient?

Recently, model-agnostic meta-learning (MAML) and its variants have drawn much attention in few-shot learning. In this paper, we investigate how to improve the performance of a portable MAML network so that it can be used in handheld devices, such as small robots, mobile phones, and laptops. We propose a novel approach named portable model-agnostic meta-learning (P-MAML), where valuable knowledge is distilled from a teacher MAML network to a portable student MAML network. Moreover, data augmentation and a ResNet architecture are employed in the teacher MAML network to avoid overfitting and enhance efficiency. To the best of our knowledge, this is the first work to consider a portable meta-learning model that uses knowledge distillation (KD) to learn a good initialization. Extensive experimental results on three real datasets show that our P-MAML algorithm greatly enhances accuracy through KD from the teacher network. As shown, P-MAML with KD improves one-shot learning performance by as much as 10% in comparison to training without KD.
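The text above does not spell out the loss P-MAML uses, so the following is only a generic sketch of knowledge distillation: the widely used temperature-scaled loss in which a student is penalized for diverging from a teacher's softened output distribution. The function names, the temperature value, and the toy logits are all assumptions for illustration, not the P-MAML implementation.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened outputs, scaled by temperature^2.

    Generic distillation loss (assumed here, not taken from the P-MAML paper).
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        pt * (math.log(pt) - math.log(ps))
        for pt, ps in zip(p_teacher, p_student)
        if pt > 0.0
    )
    return temperature ** 2 * kl


# Hypothetical logits for a single three-class example.
teacher_logits = [0.2, 1.0, 3.0]
matching_student = [0.2, 1.0, 3.0]
mismatched_student = [3.0, 1.0, 0.2]

loss_match = distillation_loss(matching_student, teacher_logits)
loss_mismatch = distillation_loss(mismatched_student, teacher_logits)
```

In this sketch the loss is zero when the student reproduces the teacher's logits and grows as the two distributions diverge. In practice this term is typically combined with the ordinary supervised loss on the true labels.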
